$1.2M NSF Award to Study Privacy in the Context of Wearable Cameras

PIs Apu Kapadia and David Crandall at IU, and Denise Anthony at Dartmouth College, have received a $1.2M collaborative NSF award (IU share: $800K) to study privacy in the context of wearable cameras over the next four years. The ubiquity of cameras, both traditional and wearable, will soon create a new era of visual sensing applications, with significant implications for individuals and society, both beneficial and hazardous. This research couples a sociological understanding of privacy with an investigation of technical mechanisms to address the resulting privacy needs. Issues such as context (e.g., capturing images for public use may be okay at a public event, but not in the home) and content (are individuals recognizable?) will be explored on both technical and sociological fronts: What can we determine about images, what does this mean in terms of privacy risk, and how can systems protect against risk to privacy?

Read more about this grant, and our project. Here is a 90-second video!

Our Work on Community-Enhanced Deanonymization to Appear at CCS 2014

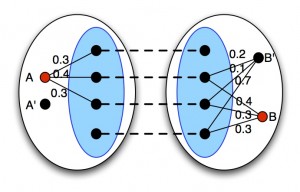

Researchers have shown how ‘network alignment’ techniques can be used to map nodes from a reference graph into an anonymized social-network graph. These algorithms, however, are often sensitive to larger network sizes, the number of seeds, and noise, which may be added to preserve privacy. We propose a divide-and-conquer approach to strengthen the power of such algorithms. Our approach partitions the networks into ‘communities’ and performs a two-stage mapping: first at the community level, and then for the entire network. Through extensive simulation on real-world social network datasets, we show how such community-aware network alignment improves de-anonymization performance under high levels of noise, large network sizes, and a low number of seeds. Read more in our paper, which will be presented at ACM CCS 2014.
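To make the two-stage idea concrete, here is a toy sketch in Python. It is not the algorithm from the paper: community labels are assumed to be given in advance (the paper computes them), the community matching is a simple vote over seed pairs, and the node-level step is a basic greedy seed propagation swapped in for illustration. All function names and the example graphs are hypothetical.

```python
from collections import Counter

def match_communities(ref_comm, anon_comm, seeds):
    """Stage 1 (illustrative): pair communities by counting the seed
    pairs that fall into each (reference, anonymized) community pair."""
    votes = Counter((ref_comm[r], anon_comm[a]) for r, a in seeds.items())
    matched, used = {}, set()
    for (rc, ac), _ in votes.most_common():
        if rc not in matched and ac not in used:
            matched[rc] = ac
            used.add(ac)
    return matched

def align(ref_adj, anon_adj, ref_comm, anon_comm, seeds):
    """Stage 2 (illustrative): greedily extend the seed mapping, only
    pairing nodes whose communities were matched in stage 1."""
    comm_map = match_communities(ref_comm, anon_comm, seeds)
    mapping = dict(seeds)
    while True:
        mapped_anon = set(mapping.values())
        best = None  # (score, ref_node, anon_node)
        for r in ref_adj:
            if r in mapping or ref_comm[r] not in comm_map:
                continue
            for a in anon_adj:
                if a in mapped_anon or anon_comm[a] != comm_map[ref_comm[r]]:
                    continue
                # Score = number of r's already-mapped neighbors whose
                # images are neighbors of a.
                score = sum(1 for n in ref_adj[r] if mapping.get(n) in anon_adj[a])
                if score > 0 and (best is None or score > best[0]):
                    best = (score, r, a)
        if best is None:
            return mapping
        mapping[best[1]] = best[2]

# Hypothetical example: a triangle (1, 2, 3) bridged to a path (4, 5, 6).
ref_adj = {1: {2, 3}, 2: {1, 3}, 3: {1, 2, 4}, 4: {3, 5}, 5: {4, 6}, 6: {5}}
anon_adj = {"a": {"b", "c"}, "b": {"a", "c"}, "c": {"a", "b", "d"},
            "d": {"c", "e"}, "e": {"d", "f"}, "f": {"e"}}
ref_comm = {1: "A", 2: "A", 3: "A", 4: "B", 5: "B", 6: "B"}
anon_comm = {"a": "X", "b": "X", "c": "X", "d": "Y", "e": "Y", "f": "Y"}
seeds = {1: "a", 4: "d"}

result = align(ref_adj, anon_adj, ref_comm, anon_comm, seeds)
```

Restricting candidates to matched communities shrinks the search space for each node, which is the intuition behind why a community-level first pass can help when the full network is large or noisy.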

Our Work on Privacy Behaviors of Lifeloggers to Appear at UbiComp 2014

A number of wearable ‘lifelogging’ camera devices have been released recently, allowing consumers to capture images and other sensor data continuously from a first-person perspective. While lifelogging cameras are growing in popularity, little is known about privacy perceptions of these devices or what kinds of privacy challenges they are likely to create. To explore how people manage privacy in the context of lifelogging cameras, as well as which kinds of first-person images people consider ‘sensitive,’ we conducted an in situ user study (N = 36) in which participants wore a lifelogging device for a week. Our findings indicate that: 1) some people may prefer to manage privacy through in situ physical control of image collection in order to avoid later burdensome review of all collected images; 2) a combination of factors including time, location, and the objects and people appearing in the photo determines its ‘sensitivity;’ and 3) people are concerned about the privacy of bystanders, despite reporting almost no opposition or concerns expressed by bystanders over the course of the study. Read our paper or visit our project page.

Google Research Award

PIs Kapadia and Crandall have received a 2014 Google Research Award for their research on privacy in the context of ‘lifelogging’ wearable cameras. We expect that these wearable cameras (see the Narrative Clip and the Autographer in addition to Google Glass) will become commonplace within the next few years, regularly capturing photos to record a first-person perspective of the wearer’s life. The goal of this project is to investigate and build automatic algorithms to organize images from lifelogging cameras, using a combination of computer vision and analysis of sensor data (like GPS, WiFi, accelerometers, etc.), thus empowering users to efficiently manage and share these images in a way that protects their privacy. As a first step, we proposed PlaceAvoider, an approach for recognizing (and avoiding) sensitive spaces within images. Read an article about this work by the MIT Technology Review. Read more about our project here.

Our Work on Exposure Feedback to Appear at CHI 2014

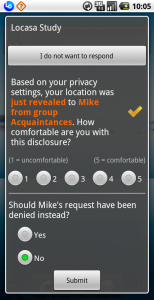

Owing to the ever-expanding size of social and professional networks, it is becoming cumbersome for individuals to configure information disclosure settings. We used location sharing systems to unpack the nature of discrepancies between a person’s disclosure settings and contextual choices. We conducted an experience sampling study (N = 35) to examine various factors contributing to such divergence. We found that immediate feedback about disclosures without any ability to control the disclosures evoked feelings of oversharing. Moreover, deviation from specified settings did not always signal privacy violation; it was just as likely that settings prevented information disclosure considered permissible in situ. We suggest making feedback more actionable or delaying it sufficiently to avoid a knee-jerk reaction. Our findings also make the case for proactive techniques for detecting potential mismatches and recommending adjustments to disclosure settings, as well as selective control when sharing location with socially distant recipients and visiting atypical locations.

Our paper will appear at CHI 2014. Read more about our Exposure project.

PlaceAvoider to Appear at NDSS 2014: Privacy in the Age of First-Person Cameras

A new generation of wearable devices (such as Google Glass and the Narrative Clip) will soon make ‘first-person’ cameras nearly ubiquitous, capturing vast amounts of imagery without deliberate human action. ‘Lifelogging’ devices and applications will record and share images from people’s daily lives with their social networks.

We introduce PlaceAvoider, a technique for owners of first-person cameras to ‘blacklist’ sensitive spaces (like bathrooms and bedrooms). PlaceAvoider recognizes images captured in these spaces and flags them for review before the images are made available to applications. PlaceAvoider performs novel image analysis using both fine-grained image features (like specific objects) and coarse-grained, scene-level features (like colors and textures) to classify where a photo was taken.
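As a rough illustration of the flag-for-review flow described above, the following Python sketch blends per-room scores from two classifiers and flags a photo when the most likely room is blacklisted. This is not PlaceAvoider's actual method: the two score dictionaries stand in for the outputs of hypothetical fine-grained and scene-level classifiers, and the equal weighting and 0.5 threshold are assumptions chosen only for the example.

```python
def classify_room(local_scores, global_scores, weight=0.5):
    """Blend per-room scores from two hypothetical classifiers
    (fine-grained and scene-level) and return the top room and its score."""
    rooms = local_scores.keys() & global_scores.keys()
    combined = {
        room: weight * local_scores[room] + (1 - weight) * global_scores[room]
        for room in rooms
    }
    best = max(combined, key=combined.get)
    return best, combined[best]

def needs_review(local_scores, global_scores, blacklist, threshold=0.5):
    """Flag a photo if its most likely room is blacklisted and the
    blended score reaches the (assumed) confidence threshold."""
    room, score = classify_room(local_scores, global_scores)
    return room in blacklist and score >= threshold

# Hypothetical blacklist and classifier outputs for two photos.
BLACKLIST = {"bathroom", "bedroom"}
bathroom_photo = ({"bathroom": 0.7, "kitchen": 0.2, "office": 0.1},
                  {"bathroom": 0.5, "kitchen": 0.3, "office": 0.2})
office_photo = ({"bathroom": 0.1, "kitchen": 0.2, "office": 0.7},
                {"bathroom": 0.2, "kitchen": 0.2, "office": 0.6})

flag_bathroom = needs_review(*bathroom_photo, BLACKLIST)
flag_office = needs_review(*office_photo, BLACKLIST)
```

Flagged photos would be held for the owner's review rather than deleted, matching the description above of images being reviewed before applications can access them.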

Our paper will appear at NDSS 2014. Read more about our ‘Vision for Privacy’ project.

PETools: Workshop on Privacy Enhancing Tools (July 9)

Indiana University hosted the interactive and thought-provoking PETools workshop, chaired by Prof. Apu Kapadia and held in conjunction with PETS 2013. The goal of this workshop was to discuss the design of privacy tools aimed at real-world deployments. The workshop brought together privacy practitioners and researchers with the aim of sparking dialog and collaboration between these communities. We thank the authors and attendees for a successful workshop! Please check out the program (with links to the abstracts)!

Apu Kapadia Receives NSF CAREER Award

Prof. Apu Kapadia’s award from the National Science Foundation (NSF) is titled CAREER: Sensible Privacy: Pragmatic Privacy Controls in an Era of Sensor-Enabled Computing. From the press release: Kapadia will receive $550,887 over the next five years to advance his work in security and privacy in pervasive and mobile computing. Kapadia’s grant will allow him to pursue development of reactive privacy mechanisms that he said could have a profound and positive societal impact by not only helping people control their privacy, but also potentially increasing their participation in sensor-enabled computing. “People need only care about the subset of data and usage scenarios that have the potential to violate their privacy, and this reduces the amount of data to which they must regulate access,” he said. “And people make better decisions concerning such access when these decisions are made in a context where they know how their data is being used.”

PlaceRaider Presented at NDSS 2013

We introduce PlaceRaider, a proof-of-concept mobile malware that exploits a smartphone’s camera and onboard sensors to reconstruct rich, 3D models of the victim’s indoor space using only opportunistically taken photos. Attackers can use these models to engage in remote reconnaissance and virtual theft of the victim’s environment. We substantiate this threat through human subject studies. Our paper was presented at the 20th Annual Network & Distributed System Security Symposium (NDSS) 2013. For more details, see the PlaceRaider project page.

FRSP Award: ‘Vision for Privacy’

Profs. David Crandall and Apu Kapadia have been awarded $50K of seed funding through the Faculty Research Support Program (FRSP) for their project titled Vision for Privacy: Privacy-aware Crowd Sensing using Opportunistic Imagery. A variety of powerful and potentially transformative ‘visual social sensing’ applications could be created by aggregating data from cameras and sensors on smartphones and emerging technologies such as augmented reality glasses (e.g., Google’s Project Glass). These applications, however, raise major privacy concerns because of the large amount of potentially private data that could be captured. This project investigates techniques to provide guarantees on privacy in the context of such applications. For more details, see our project page.