Paper ‘Challenges in Transitioning from Civil to Military Culture: Hyper-Selective Disclosure through ICTs’ to be Presented at CSCW ’18

A critical element for a successful transition is the ability to disclose, or make known, one’s struggles. In our paper ‘Challenges in Transitioning from Civil to Military Culture: Hyper-Selective Disclosure through ICTs’ we explore the transition disclosure practices of Reserve Officers’ Training Corps (ROTC) students who are transitioning from an individualistic culture to one that is highly collective. As ROTC students routinely evaluate their peers through a ranking system, the act of disclosure may impact a student’s ability to secure limited opportunities within the military upon graduation. We interview 14 ROTC students to study how they use information communication technologies (ICTs) to disclose their struggles in a hyper-competitive environment. We find that they engage in a process of highly selective disclosure, choosing different groups to disclose to based on the types of issues they face. We share implications for designing ICTs that better facilitate how ROTC students cope with personal challenges during their formative transition into the military.

Read more in our CSCW 2018 paper.

Paper ‘To Permit or Not to Permit, That is the Usability Question’ Presented at PETS ’17

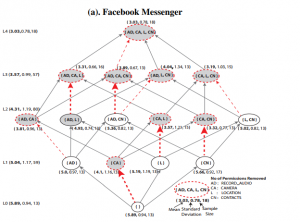

Millions of apps available to smartphone owners request various permissions to resources on the devices including sensitive data such as location and contact information. Disabling permissions for sensitive resources could improve privacy but can also impact the usability of apps in ways users may not be able to predict. In our paper ‘To Permit or Not to Permit, That is the Usability Question: Crowdsourcing Mobile Apps’ Privacy Permissions Settings’ we study an efficient approach that ascertains the impact of disabling permissions on the usability of apps through large-scale, crowdsourced user testing with the ultimate goal of making recommendations to users about which permissions can be disabled for improved privacy without sacrificing usability.

We replicate and significantly extend previous analysis that showed the promise of a crowdsourcing approach where crowd workers test and report back on various configurations of an app. Through a large, between-subjects user experiment, our work provides insight into the impact of removing permissions within and across different apps. We had 218 users test Facebook Messenger, 227 test Instagram, and 110 test Twitter. We discover that it is possible to increase user privacy by disabling app permissions while also maintaining app usability.

Paper ‘Cartooning for Enhanced Privacy in Lifelogging and Streaming Videos’ Presented at CV-COPS ’17

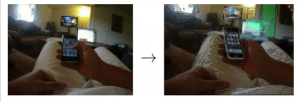

In the paper ‘Cartooning for Enhanced Privacy in Lifelogging and Streaming Videos’, we describe an object replacement approach whereby privacy-sensitive objects in videos are replaced by abstract cartoons taken from clip art. We used a combination of computer vision, deep learning, and image processing techniques to detect objects, abstract details, and replace them with cartoon clip art. We conducted a user study with 85 users to discern the utility and effectiveness of our cartoon replacement technique. The results suggest that our object replacement approach preserves a video’s semantic content while improving its privacy by obscuring details of objects.

Paper ‘Was My Message Read?’ Presented at CHI ’17

Major online messaging services such as Facebook Messenger and WhatsApp are starting to provide users with real-time information about when recipients read their messages. This useful feature has the potential to negatively impact privacy as well as cause concern over access to self. In the paper ‘Was My Message Read?: Privacy and Signaling on Facebook Messenger’ we surveyed 402 senders and 316 recipients on Mechanical Turk. We looked at senders’ use of and reactions to the ‘message seen’ feature, and recipients’ privacy and signaling behaviors in the face of such visibility. Our findings indicate that senders experience a range of emotions when their message is not read, or is read but not answered immediately. Recipients also engage in various signaling behaviors in the face of visibility by either replying or not replying immediately.

Paper ‘Viewing the Viewers’ Presented at CSCW ’17

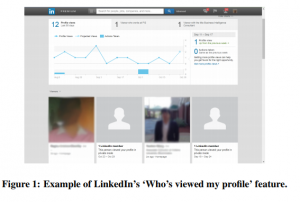

Social networking sites are starting to offer users services that provide information about their composition and behavior. LinkedIn’s ‘Who viewed my profile’ feature is an example. Providing information about content viewers to content publishers raises new privacy concerns for viewers of social networking sites. In the paper ‘Viewing the Viewers: Publishers’ Desires and Viewers’ Privacy Concerns in Social Networks’ we report on 718 Mechanical Turk respondents. Of these, 402 were surveyed on publishers’ use and expectations of information about their viewers, and 316 were surveyed about privacy behaviors and concerns in the face of such visibility. In some instances, such as dating sites, gender differences exist in what information respondents felt should be shared with publishers and required of viewers.

Paper ‘Understanding Physical Safety, Security, and Privacy Concerns of People with Visual Impairments’ Published in IEEE Internet Computing

Various assistive devices are able to give greater independence to people with visual impairments both online and offline. Significant work remains to understand and address their safety, security, and privacy concerns, especially in the physical, offline world. People with visual impairments are particularly vulnerable to physical assault and theft, shoulder-surfing attacks, and being overheard during private conversations. In the paper ‘Understanding the Physical Safety, Security, and Privacy Concerns of People with Visual Impairments’ we conduct two sets of interviews to find out how people with visual impairments manage these concerns and how assistive technologies can help. The paper also proposes design considerations for camera-based devices that would help people with visual impairments monitor for potential threats around them.

Paper ‘Addressing Physical Safety, Security, and Privacy for People with Visual Impairments’ Presented at SOUPS 2016

People with visual impairments face numerous obstacles in their daily lives. Due to these obstacles, they face a variety of physical privacy concerns. Researchers have recently studied how emerging technologies, such as wearable devices, can help address these concerns. In the paper ‘Addressing Physical Safety, Security, and Privacy for People with Visual Impairments’ we conduct 19 interviews with participants who have visual impairments in the greater San Francisco metropolitan area. Our participants’ detailed accounts illuminated three topics related to physical privacy. The first is the safety and security concerns of people with visual impairments in urban environments, such as feared and actual instances of assault. The second is their behaviors and strategies for protecting physical safety. The last is a set of refined design considerations for future wearable devices that could enhance their awareness of surrounding threats.

Paper on ‘Twitter’s Glass Ceiling’ Presented at ICWSM 2016

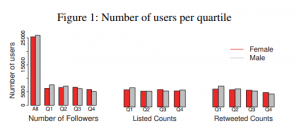

Social media gives people the potential to communicate freely regardless of their status. In practice, social categories like gender may still bias online communication, replicating offline disparities. In the paper ‘Twitter’s Glass Ceiling: The Effect of Perceived Gender on Online Visibility’ we study over 94,000 Twitter users to investigate the association between perceived gender and measures of online visibility. We find that users perceived as female experience a ‘glass ceiling’, similar to the barrier women face in attaining higher positions in companies. Being perceived as female is associated with more visibility for users in lower quartiles of visibility, but the opposite is true for the most visible users, where being perceived as male is strongly associated with more visibility. Our analysis suggests that gender presented in social media profiles likely frames interactions and perpetuates old inequalities online.

CHI 2016 Paper – Honorable Mention Award!

Our work on detecting computer monitors within photos has been accepted to ACM CHI 2016 and has received an Honorable Mention Award (Top 4% of submissions).

Low-cost, lightweight wearable cameras let us record (or ‘lifelog’) our lives from a ‘first-person’ perspective for purposes ranging from fun to therapy. But they also capture private information that people may not want to be recorded, especially if images are stored in the cloud or visible to other people. For example, recent studies suggest that computer screens may be lifeloggers’ single greatest privacy concern, because many people spend a considerable amount of time in front of devices that display private information. In this paper, we investigate using computer vision to automatically detect computer screens in photo lifelogs. We evaluate our approach on an existing in-situ dataset of 36 people who wore cameras for a week, and show that our technique could help manage privacy in the upcoming era of wearable cameras.

Read more about it at our Project Page. Here is our paper and the extended technical report.

Four Papers at CHI 2015

The IU Privacy Lab led by PI Apu Kapadia has four papers accepted at CHI 2015! The first paper titled Privacy Concerns and Behaviors of People with Visual Impairments is a qualitative study that reports on interviews with 14 visually impaired people and suggests new directions for improving the privacy of the visually impaired using wearable technologies. The second paper titled Crowdsourced Exploration of Security Configurations explores the use of crowdsourcing to efficiently determine restricted sets of permissions that can strike reasonable tradeoffs between privacy and usability for smartphone apps.

The third paper (Note) titled Sensitive Lifelogs: A Privacy Analysis of Photos from Wearable Cameras is a followup study to our UbiComp 2014 paper titled Privacy Behaviors of Lifeloggers using Wearable Cameras. For this Note we analyzed the photos collected in our lifelogging study, seeking to understand what makes a photo private and what we can learn about privacy in this new and very different context where photos are captured automatically by one’s wearable camera. The fourth paper (Note) titled Interrupt Now or Inform Later?: Comparing Immediate and Delayed Privacy Feedback follows up on our CHI 2014 paper titled Reflection or Action?: How Feedback and Control Affect Location Sharing Decisions. This Note explored the effect of providing immediate vs. delayed privacy feedback (e.g., for location accesses). We found that the sense of privacy violation was heightened when feedback was immediate but not actionable, a finding that has implications for how and when privacy feedback should be provided.